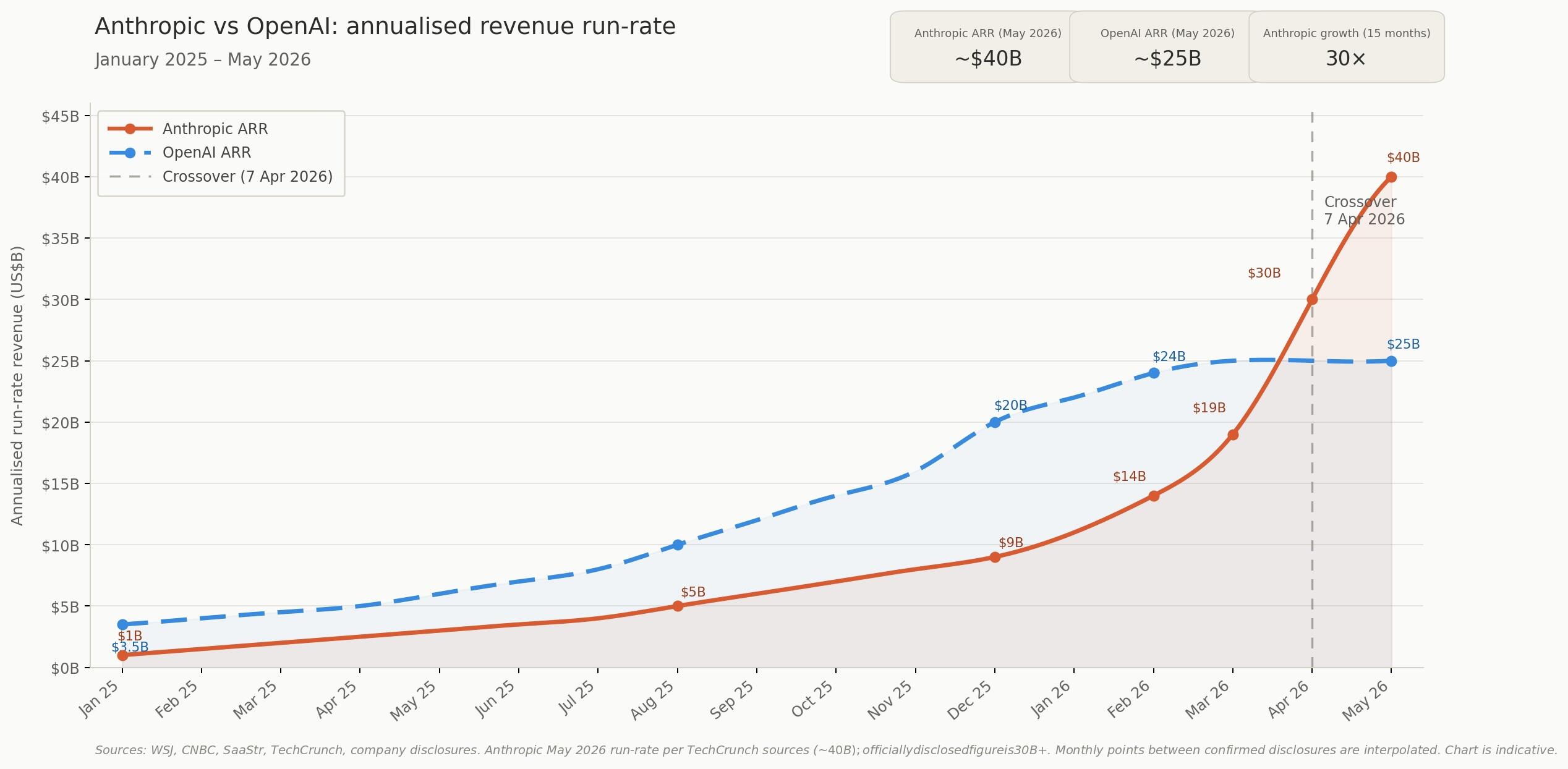

Anthropic has overtaken OpenAI on revenue, is days from a board decision on a US$900 billion valuation, and just secured a compute deal with its most vocal critic. Here's a rundown of its growth to date:

Sometime in the first week of April, a four-year-old lab most consumers have never heard of quietly passed the world's most recognised tech company on revenue.

Anthropic - the San Francisco outfit behind the Claude model family - hit US$30 billion in annualised run-rate on April 7, edging past OpenAI's US$25 billion for the first time since ChatGPT launched in late 2022, a crossover analysts had pencilled in for August at the earliest.

Going from US$1 billion in annualised revenue in January 2025 to $30 billion by April 2026 is a 30-fold expansion in 15 months - a growth arc that took Salesforce the better part of t

Sources with direct knowledge of the financials put the actual figure closer to $40 billion, and a board decision on a ~$50 billion raise at a $900 billion valuation is expected this month - more than double the $380 billion set in February.

Why the numbers keep climbing

Behind every funding round is the same cost driver: silicon.

Demand has been running ahead of Anthropic's processing capacity for months, and the lab has been stacking supply agreements to close the gap.

In late April, Google committed to invest up to $40 billion in Anthropic - $10 billion immediately and $30 billion contingent on performance milestones - alongside a 5 GW Google Cloud capacity commitment starting in 2027.

Amazon separately expanded its arrangement to up to $25 billion and 5 GW of its own, with nearly 1 gigawatt arriving by end of 2026, and a Microsoft and NVIDIA partnership added $30 billion in Azure capacity to the stack.

Combined with a $50 billion U.S. data centre build with Fluidstack across Texas and New York, Anthropic has assembled a 10-gigawatt forward supply - a power draw comparable to that of several mid-sized cities.

The problem is timing: almost none of it arrives before late 2026 at the earliest, and demand from Claude's paying user base has been compressing against existing infrastructure in the interim.

That gap is what Anthropic moved to close on May 6.

The lab signed a deal with SpaceX to take all available capacity at Colossus 1 - the 300-megawatt Memphis facility SpaceX's xAI division built to train Grok, currently running well below utilisation - delivering more than 220,000 NVIDIA GPUs within the month.

Within hours, Claude Code rate limits were doubled, peak-hour caps lifted for paid subscribers, and API throughput increased for Opus model users - the first hardware agreement in recent memory to produce same-day product changes.

The deal carries a notable backstory: Elon Musk spent much of last week in federal court testifying in his lawsuit against OpenAI, while simultaneously leasing his AI cluster to OpenAI's closest revenue rival - a company he labelled "misanthropic" as recently as February.

Musk said his decision followed time spent with senior Anthropic staff last week, writing on X that everyone he met "cared a great deal about doing the right thing."

For context on the competitive scale, OpenAI's infrastructure commitments run considerably larger, with CEO Sam Altman disclosing that Stargate and bilateral agreements with NVIDIA, AMD, and Oracle total an eye-popping $1.4 trillion over eight years - three times the entire U.S. IPO market's combined output from 2016 to 2025.

Two playbooks, one clear gap

The revenue crossover matters, though what it reveals about each company's underlying structure is more consequential.

Anthropic derives roughly 80% of its top line from corporate contracts, with more than 1,000 businesses spending over $1 million annually on Claude - a count that doubled between February and April alone, as eight of the Fortune 10 joined the client list.

OpenAI runs on consumer volume: 900 million weekly ChatGPT users, of whom only around 5.5% pay for a subscription, leaving the company carrying the compute bill for every free query across its user base.
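As a rough check on the consumer figures above, the short Python sketch below turns the 5.5% paid share into headcounts. The two inputs are the article's own numbers; the variable names are illustrative, and the split assumes the 5.5% applies to all 900 million weekly users.

```python
# Inputs taken from the article; names are illustrative only.
weekly_users = 900_000_000   # weekly ChatGPT users
paying_share = 0.055         # share reported as paying for a subscription

# Round to whole users to avoid floating-point noise.
paying_users = round(weekly_users * paying_share)
free_users = weekly_users - paying_users

print(f"Paying users: {paying_users / 1e6:.1f} million")
print(f"Free users:   {free_users / 1e6:.1f} million")
```

On those inputs, about 49.5 million users pay and roughly 850.5 million do not.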

Those structural differences are showing up clearly in each company's forward financials.

Anthropic is projecting positive cash flow by 2028, with its burn rate falling to approximately 9% of revenue by 2027 as enterprise contract volumes compound.

Financial documents reviewed by The Wall Street Journal put OpenAI's operating losses at $74-85 billion in 2028 - roughly three-quarters of that year's projected revenue - with breakeven not arriving before 2030, a timeline HSBC Global Research considers optimistic.

Wall Street moves in

On May 4, Anthropic announced a $1.5 billion joint venture with Blackstone, Hellman & Friedman, and Goldman Sachs to deploy Claude engineers directly inside mid-sized businesses across the firms' combined portfolio networks, with Apollo Global Management, General Atlantic, GIC, and Sequoia Capital also backing the vehicle.

The structure embeds Anthropic's engineering staff inside client operations - a forward-deployment model placing the venture in direct competition with established consulting firms for corporate AI implementation work.

For every dollar corporations spend on software, they spend six on services - a materially larger market than licensing alone.

Hours before that announcement, Bloomberg reported OpenAI was standing up an identical vehicle - a services entity called The Development Company, co-funded with TPG and Bain Capital. Both labs reached the same conclusion on the same day about where sustainable margin is most likely to come from.

Those margin assumptions feed directly into the valuation being put to Anthropic's board this month.

At US$900 billion against a top line of $30 billion to $40 billion, the implied price-to-revenue multiple sits between 22x and 30x, in line with prior infrastructure-layer platform cycles.
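The 22x to 30x range follows from dividing the $900 billion valuation by the two revenue figures cited earlier: roughly $40 billion at the high end and the $30 billion run-rate at the low end. A minimal Python sketch of that arithmetic, with an illustrative helper name:

```python
def revenue_multiple(valuation_bn: float, revenue_bn: float) -> float:
    """Price-to-revenue multiple: valuation divided by annualised revenue."""
    return valuation_bn / revenue_bn

valuation_bn = 900.0      # proposed valuation, in billions of dollars
low_revenue_bn = 30.0     # reported annualised run-rate
high_revenue_bn = 40.0    # figure attributed to sources

# Higher revenue gives the lower multiple, and vice versa.
multiple_low = revenue_multiple(valuation_bn, high_revenue_bn)
multiple_high = revenue_multiple(valuation_bn, low_revenue_bn)

print(f"Implied multiple: {multiple_low:.1f}x to {multiple_high:.1f}x")
```

This prints `Implied multiple: 22.5x to 30.0x`, so the multiple quoted depends on which revenue figure is used.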

Goldman Sachs, JPMorgan, and Morgan Stanley are in early discussions on an IPO that Bloomberg has reported could arrive as early as October 2026, with OpenAI tracking a similar timeline at a ~$1 trillion target valuation.

Sustaining those multiples in the market will depend on two variables: whether Anthropic's corporate client base holds against open-source pricing pressure - China's DeepSeek delivers roughly 90% of frontier capability at ~1/50th of the API cost - and whether OpenAI converts enough of its 900 million weekly users to paying accounts before its infrastructure commitments compound further.