Anthropic has sued the United States government to block its placement on a national security blacklist, escalating the dispute between the two over how the military can use the company’s technology.

The privately owned artificial intelligence (AI) model developer filed a complaint in the U.S. District Court for the Northern District of California on Monday (Tuesday AEDT) seeking to overturn a Pentagon decision that labelled Anthropic a “supply chain risk”.

In the filing, the company, which is known for its Claude chatbot, said the decision violated its constitutional rights, including free speech and due process protections.

“These actions are unprecedented and unlawful. The Constitution does not allow the government to wield its enormous power to punish a company for its protected speech,” Anthropic said.

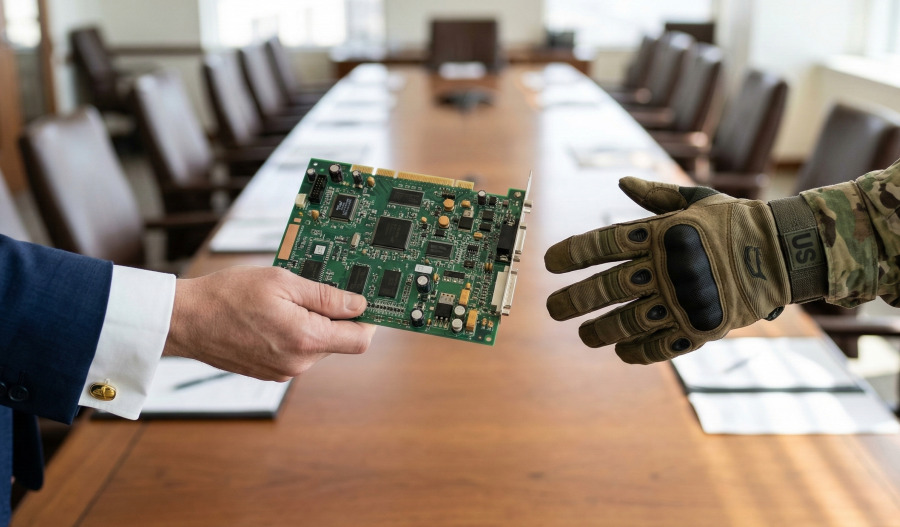

The Pentagon on Thursday formally placed a supply-chain risk designation on Anthropic after the company refused to remove guardrails preventing the use of its technology for autonomous weapons or domestic surveillance.

The designation means defence contractors and suppliers have to assert they do not use Anthropic’s AI tools in work related to the Defense Department.

The company has also sought a formal review of the Department’s determination in the U.S. Court of Appeals in Washington, D.C.

U.S. President Donald Trump last month directed government agencies to stop using the company’s technology and wrote in a post on his Truth Social site: “WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about.”

Anthropic said the decision was damaging its business, with government contracts being cancelled and commercial deals potentially in danger as well.

Anthropic had positioned itself as a partner to U.S. national security agencies as they seek to adopt cutting-edge AI technology.

Chief Executive Dario Amodei has said the company does not oppose the development of AI-driven weapons, but it does not see current models as reliable enough to be used autonomously in combat.

Anthropic was founded in 2021 by former OpenAI researchers, including Amodei and his sister Daniela Amodei, and was valued at US$380 billion (A$538 billion) in its latest fundraising round.